Please support our work with a donation!

- Go to MIT's Donation Form for the project.

- Enter a donation amount, then press CONTINUE to finalize.

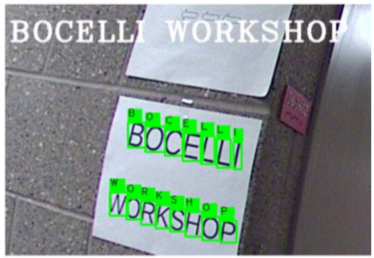

We are grateful to the Andrea Bocelli Foundation and to the MIT EECS SuperUROP program for their support of our research.